In this post I’m going to outline the contents of my third chapter. This chapter is partially based on a paper I have in Philosophical Explorations. I now think that this paper is mistaken in some important respects, however. This thesis chapter does a much better job on this topic.

Chapter Aim

The main aim of the chapter is to extract three insights from the mid-twentieth century Cambridge psychologist, philosopher, and control theorist Kenneth Craik:

- an account of mental representation in terms of idealised models in the brain that share an abstract structure with target domains in the body and world.

- an appreciation of prediction as the core function of such models.

- a regulatory (i.e. cybernetic) understanding of brain function.

The rest of the thesis then builds on and clarifies these ideas, relates them to contemporary advances in cognitive science, and spells out some of their important implications and limitations.

Kenneth Craik

In the previous chapter I suggested that a theory of mental representation should be exclusively motivated by the explanatory constraints of our best cognitive science. It might seem odd, then, to turn to the views of an obscure psychologist from the 1940s. Nevertheless, there are at least two reasons for including Craik’s work in my thesis.

- Craik wrote in a period of maximal scepticism about mental representations in both psychology and philosophy. Nevertheless, he felt that non-representational psychology is bereft of the theoretical tools to explain intelligence. I think that Craik’s basic motivation for positing mental representations—namely, the importance of prediction to adaptive success—was deeply insightful, and it is a theme that I return to in later chapters.

- I think that Craik was basically right! That is, I think that an account of (at least some important aspects of) mental representation in terms of idealised models in the brain that capitalize on structural similarity to bodily and environmental targets in the service of prediction is pretty well-supported by recent advances in neuroscience and cognitive psychology.

Biography

Before I get to the main part of the post, I feel compelled to give a brief bio of Kenneth Craik, given the general ignorance of him and his work. (Here and here are some good articles).

- Craik studied philosophy and psychology as an undergraduate at Edinburgh University.

- He then went to Cambridge. Before he arrived there, his supervisor from Edinburgh told Sir Frederic Bartlett, “Next term I am going to send you a genius!”

- He did pioneering work on visual psychology and physiology at Cambridge.

- He was heavily involved in the design of military technologies during the Second World War.

- For this, he was appointed the first Director of the Medical Research Council’s Applied Psychology Unit in 1944.

- He died in a bicycling accident less than a year later, two days before VE Day. He was 31 years old.

Craik is mostly known (insofar as he is known at all) for a philosophical/psychological monograph, ‘The Nature of Explanation’. A second book, a collection of unfinished writings called ‘The Nature of Psychology,’ was published in 1966 and is fantastic. I draw on both in the thesis chapter.

Craik’s distinctively philosophical views—industrial strength realism about the external world, a staunch commitment to naturalism and a disdain for conceptual analysis and a priori philosophy, a commitment to the importance of causal explanations in science—were all ahead of his time, but I won’t dwell on them here.

(A short aside: unlike, say, philosophers such as Wittgenstein and Heidegger from the same era, Craik’s work was commonsensical, unpretentious, deeply knowledgeable about science, and extremely prescient, i.e. it actually got a lot right about how the mind works. For this, he is rewarded by being basically forgotten in philosophy. There is a lesson about philosophy in that somewhere, although I’m not sure it’s an encouraging one.)

Craik’s “Hypothesis on the Nature of Thought”

If people know anything about Craik’s work, it is usually this famous quote:

“If the organism carries a “small-scale model” of external reality and of its own possible actions within its head, it is able to try out various alternatives, conclude which is the best of them, react to future situations before they arise, utilise the knowledge of past events in dealing with the present and future, and in every way to react in a much fuller, safer, and more competent manner to the emergencies which face it.”

I will briefly unpack what I think are three core insights from his work.

- Mental Representations as Mental Models

The first insight that I extract from Craik’s work is this:

- We should understand mental representation in terms of models in the brain that share an abstract structure with target domains in the body and world.

The idea that models in general are representations that capitalize on structural similarity to their targets—that a model “has a similar relation-structure to that of the process it imitates”—is a common idea that I will be returning to at greater length in future chapters (posts).

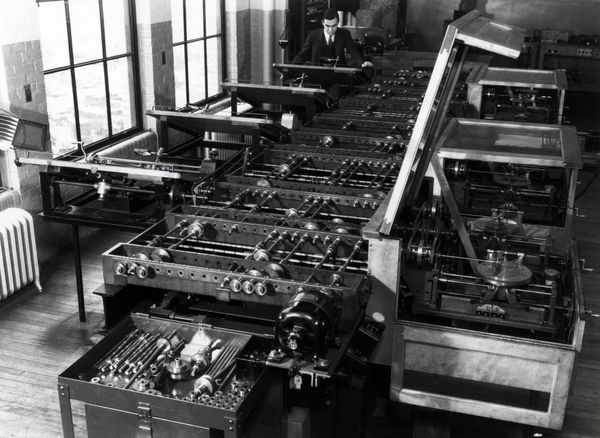

Although Craik does appeal to ordinary scale models to illustrate this point (where the structural similarity is most obvious), the chief examples he draws on are the analogue computers prominent in his day. For example, he draws on Lord Kelvin’s tide predictor, an analogue computing machine that exploited a complex system of pulleys and wires to predict sea tides, as well as the so-called “differential analyser,” the world’s first general-purpose analogue computer, which can be seen here:

Although the details of how such machines work are fiendishly complicated, they exhibit a property that greatly impressed Craik: although they look nothing like the systems whose behaviour they are designed to predict, they nevertheless ground a flexible predictive capacity by cleverly arranging material parts and operations so as to mimic or simulate the dynamics of such systems.

In this sense analogue computers employ analogues of the systems that they are used to reason about. As a popular textbook on analogue computers puts it,

“the components of each computer… are assembled to permit the computer to perform as a model, or in a manner analogous to some other physical system.”
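To make the idea concrete, here is a rough software analogue of what the tide predictor did with pulleys and wire: it added up a handful of periodic components to produce a predicted water level. This is only a sketch under stated assumptions. The two periods are those of the principal lunar and solar semidiurnal tides, but the amplitudes, phases, and the function name `predicted_tide` are invented for illustration and do not describe any real port or Kelvin's actual settings.

```python
import math

# Illustrative constituents: (amplitude in metres, period in hours, phase in radians).
# The periods correspond to the principal lunar (M2) and solar (S2) semidiurnal
# tides; the amplitudes and phases are made up for this example.
CONSTITUENTS = [
    (1.2, 12.42, 0.0),  # M2
    (0.4, 12.00, 0.5),  # S2
]


def predicted_tide(t_hours, constituents=CONSTITUENTS, mean_level=0.0):
    """Sum the periodic components to get a predicted height at time t_hours."""
    return mean_level + sum(
        amp * math.cos(2 * math.pi * t_hours / period + phase)
        for amp, period, phase in constituents
    )


if __name__ == "__main__":
    # Print the predicted height every six hours over one day.
    for t in range(0, 25, 6):
        print(f"t = {t:2d} h  height = {predicted_tide(t):+.2f} m")
```

Nothing in this code looks like the sea, yet the relations between its parts mirror the relations between the tidal components, which is the sense of structural similarity at issue.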

For Craik, this suggested that there is no in-principle reason why the brain could not work in a similar way. That is, although the brain looks nothing like the kinds of things that we interact with, it could—in principle, at least—be configured in such a way as to mimic processes in the world.

This might seem commonsensical, even banal, now. At the time, however, it suggested a concrete hypothesis about how we might employ mental representations that didn’t draw on any spooky “mental pictures” or little homunculi inside the mind. Instead, Craik’s hypothesis was just that the brain is capable of implementing processes that model (that is, share an abstract structure with) target processes in the body and world.

Of course, the fact that this hypothesis is coherent does not provide any reason for thinking that it is true. Why think that there are such models inside the brain?

Craik was exquisitely sensitive to this worry:

“Some may object that this reduces thought to a mere “copy” of reality and that we ought not to want such an internal “copy”; are not electrons, causally interacting, good enough?”

(Compare: “isn’t the world its own best model?”)

Craik’s answer was simple:

“only this internal model of reality—this working model—enables us to predict events which have not yet occurred in the physical world, a process which saves time, expense, and even life.”

- The Predictive Mind

Craik’s commitment to the importance of models in the brain is not motivated by a commitment to the importance of, say, pattern recognition, feature detection, or abstract logical reasoning. Rather, he thinks that such models provide the best explanation of a kind of capacity that he thought the behaviourists of his day wrongfully neglected: prediction.

For Craik, “one of the most fundamental properties of thought is its power of predicting events,” which gives it “immense and constructive significance.”

Importantly, Craik thought this predictive competence extends far beyond just human beings.

This raises two questions:

Why think that prediction requires internal models?

Why think that prediction is so central to adaptive success?

Prediction and Internal Representations

On the one hand, the idea that prediction requires representation of some kind seems pretty intuitive:

- Most nonrepresentational paradigms in psychology have put forward highly reactive accounts of intelligence (e.g. behaviourism, Brooks’ 1990s robots).

- Prediction paradigmatically concerns what is going to happen. But you can’t interact “directly” with such non-actual states. Thus it seems plausible that you must employ something that stands in for—i.e. represents—those states.

On the other hand, any simple link between prediction and representation is bogus:

- It’s important not to conflate prediction in general with conscious, personal-level predictions like “Trump will lose the next election.” The kind of prediction that Craik had in mind concerned predictive or anticipatory capacities in general.

- Even good old-fashioned behaviourism could account for a kind of “prediction” (in all-important quote marks). Pavlov’s dog hears the bell and “predicts” food. Through reinforcement learning, an animal learns which behaviours “predict” rewards/punishments.

Flexible Prediction

These objections help to illustrate something important about Craik’s emphasis on prediction: he focused on a kind of flexible predictive capacity that goes far beyond the mere responsiveness to correlations described by behaviourists. Unfortunately, Craik did not do a particularly good job of clarifying what this extra flexibility consists in. In previous work I suggested that his focus was on what I called “counterfactual competence,” the capacity to model the causal structure of the world in such a way that an animal can generate predictions about the likely outcomes of a wide range of merely possible interventions.

I now think that things are a bit messier. That is, I think that Craik had in mind a wide range of potential uses of prediction:

- The role of prediction in what is now called “model-based reinforcement learning,” i.e. choosing actions based on explicit predictions of their likely effects (rather than just repeating whatever behaviours have been reinforced in the past).

- The capacity of the brain to predict the likely sensory consequences of the organism’s behaviour in order to overcome signalling delays.

- Flexible predictive tracking mechanisms.

- The ability to use predictive models to represent features of the world the organism does not currently have access to.
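The first item on this list, and its contrast with merely repeating reinforced behaviour, can be sketched in a few lines. This is a toy illustration of my own, not anything Craik wrote: the one-dimensional world, the goal position, and the names `internal_model`, `model_based_choice`, and `habit_choice` are all hypothetical.

```python
# Hypothetical toy world: positions on a line, with food at position GOAL.
GOAL = 3
ACTIONS = {"left": -1, "stay": 0, "right": +1}


def internal_model(position, action):
    """The agent's model of the world: predicts where an action would lead."""
    return position + ACTIONS[action]


def model_based_choice(position, goal=GOAL):
    """Try out each action 'in the head' and pick the best predicted outcome."""
    return min(ACTIONS, key=lambda a: abs(goal - internal_model(position, a)))


# A purely reactive agent: it repeats whatever was reinforced in the past.
# Assume it was only ever rewarded for moving right, because it always
# started to the left of the food.
HABIT = "right"


def habit_choice(position):
    """Ignore the current situation and emit the reinforced response."""
    return HABIT


if __name__ == "__main__":
    for start in (0, 5):
        print(start, model_based_choice(start), habit_choice(start))
```

Starting to the right of the food, a situation never encountered before, the model-based agent still heads the correct way, while the habit-driven agent walks off in the wrong direction. That is one simple sense in which prediction can be flexible.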

Nevertheless, I will not dwell on this exegetical issue here. What I think is important in Craik’s work—and it’s a theme that I will return to later—is the insight that mental models provide fuel for a very specific kind of success: predictive success.

- The Cybernetic Brain

Craik’s commitment to the importance of modelling the world might suggest a commitment to an influential metaphor of the brain as a kind of scientist, an idea that goes back at least to Helmholtz. According to this view, brains function like idealised scientists, formulating hypotheses about the distal world and testing these hypotheses against sensory evidence to arrive at increasingly accurate models of reality.

(ASIDE: Helmholtz is often credited as the “grandfather” of current interest in the “predictive mind.” In fact, I think that there is a confusion in the neuroscientific literature between a vision of the brain as a predictive mechanism and the brain as an inferential one. Whilst the former (I think) implies the latter, the latter doesn’t necessarily imply the former. As far as I can see, Helmholtz was really one of the originators of a constructivist theory of perception, according to which perception is a process of knowledge-driven inference. But ampliative inference of that kind can be accomplished in purely feedforward architectures without anything resembling prediction. See, e.g., Marr’s theory of vision or contemporary deep feedforward nets.)

In fact, I don’t think that Craik would have accepted anything like this “brain as scientist” metaphor. He evidently thought that the ultimate use of mental models is much more pragmatic than that. Unlike scientists—that is, idealised scientists with no counterpart in the actual world—who are concerned only with truth for truth’s sake, the brain is first and foremost an engine of adaptive success: that is, an engine for getting things done.

In previous work I suggested that the “brain as an engineer” might therefore provide a better analogy for understanding Craik’s work. I now think that this analogy is just silly. No doubt the brain is in an important sense the product of something akin to engineering (i.e. through evolution), but—beyond the combined use of modelling and pragmatism—the analogy breaks down.

In any case, I subsequently discovered that Craik himself offered a much less metaphorical conception of brain function in unpublished work. This was a regulatory understanding of brain function, the third insight that I extract from Craik’s work.

The Brain as Regulator

Craik was among a group of theorists and engineers in the 1940s—many of them deeply involved with the design of military technology during the Second World War—who gave rise to the field of cybernetics, the study of regulatory systems.

In its initial form, at least, cybernetics sought to understand self-organization and adaptive behaviour in terms of cyclical processes in which information about the outcomes of a system’s actions is detected and compared to goal states, and the result of that comparison determines whether the system ceases, changes, or continues those actions.

As Craik put it, error-correcting teleological mechanisms in this sense

“show behaviour which is determined not just by the external disturbances acting on them and their internal store of energy, but by the relation between their disturbed state and some assigned state of equilibrium.”
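A minimal sketch of such an error-correcting loop, assuming a simple proportional rule, might look like this. The function name `regulate`, the `gain` parameter, and the numbers are my own illustrative choices rather than anything from the cybernetics literature.

```python
def regulate(state, set_point, disturbances, gain=0.5):
    """Negative feedback: sense the state, compare it to the set point, correct.

    Returns the state after each cycle of disturbance and correction.
    """
    history = []
    for disturbance in disturbances:
        state += disturbance       # the environment perturbs the system
        error = set_point - state  # compare the detected outcome to the goal state
        state += gain * error      # corrective action proportional to the error
        history.append(state)
    return history


if __name__ == "__main__":
    # One large disturbance, then none: the state is pulled back toward 37.0.
    trace = regulate(state=37.0, set_point=37.0, disturbances=[-4.0] + [0.0] * 9)
    print([round(x, 2) for x in trace])
```

The corrective action at each step depends only on the gap between the disturbed state and the assigned equilibrium, which is the relation Craik picks out in the passage above.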

For Craik (and other cyberneticists), this suggested a general conception of the brain as what Craik called “an element in a control system” or an “automatic regulating system.” Its task is to effectively coordinate the organism’s behaviour with the world in such a way as to preserve the organism’s structural integrity and the constancy of its internal states (i.e. “homeostasis,” broadly construed).

“Living organisms,” Craik (1966, pp.12-3) writes, are

“in a state of dynamic equilibrium with their environments. If they do not maintain this equilibrium they die; if they do maintain it they show a degree of spontaneity, variability, and purposiveness of response unknown in the non-living world. This is what is meant by “adaptation to environment” … [Its] essential feature… is stability—that is, the ability to withstand disturbances.”

Because this blog post is far too long already, I will bring things to a close here. The basic idea, which I outline in this chapter and then develop in later chapters, is that mental mechanisms, including the predictive modelling identified by Craik, can ultimately be understood in terms of a pragmatic goal: namely, maintaining the organism within that narrow set of possible states consistent with its survival.

To be honest, this is the main element of my thesis that I am reaaally unsure about, and I wasn’t sure whether to keep it in. In fact, I can think of a few hundred objections to this regulatory account of brain function (which I expect to receive in my viva. eek).

Nevertheless, I included it in the thesis for a few reasons:

- Its nice connection with Craik’s work.

- The connection with certain themes from predictive processing and the “free energy principle” that I turn to in later chapters (posts).

- It sets up a theoretical perspective that identifies the deeply pragmatic origins of our mental models—a theme I also turn to in later chapters, when I consider the way in which we model the world only insofar as it matters to us as specific kinds of organisms. (See Chapter 9: “Modelling the Umwelt: Structural Representation and the Manifest Image”).

Conclusion

To summarise, then, I argue that one can extract from Craik’s work a kind of proto-theory of mental representation founded on neurally realised predictive models in the brain that share an abstract structure with processes in the body and environment—predictive models that ultimately work in the service of enabling intelligent animals to regulate their internal milieu.

In future chapters (posts), I will elaborate on the functional profile of models (Ch.4), show how model-based representation should be understood in the context of neural mechanisms (Ch.5), relate these ideas to research in the contemporary cognitive sciences (Chs. 6 and 7), and then draw out the implications of a predictive model-based theory of mental representation for foundational questions concerning mental representation in philosophy and cognitive science.

If you’ve made it this far, thanks for reading!